Last summer, facing down the rapidly approaching fall semester, I realized it was probably time to accept that I had to start thinking about “Artificial Intelligence.” I put together an AI reading list, and soon I was all too aware of the economic and environmental risks of large language models and their ilk, the drawbacks of overreliance on a new and unproven technology, the depressing-if-speculative cognitive effects of outsourcing thought and reasoning to machines, and of course the threat of my institution trying to replace me with some kind of TA chatbot. But what was I actually supposed to do about it? After reading more and talking to colleagues, I landed on an imperfect but practical idea: I would make each student a booklet, and we would practice writing in class.

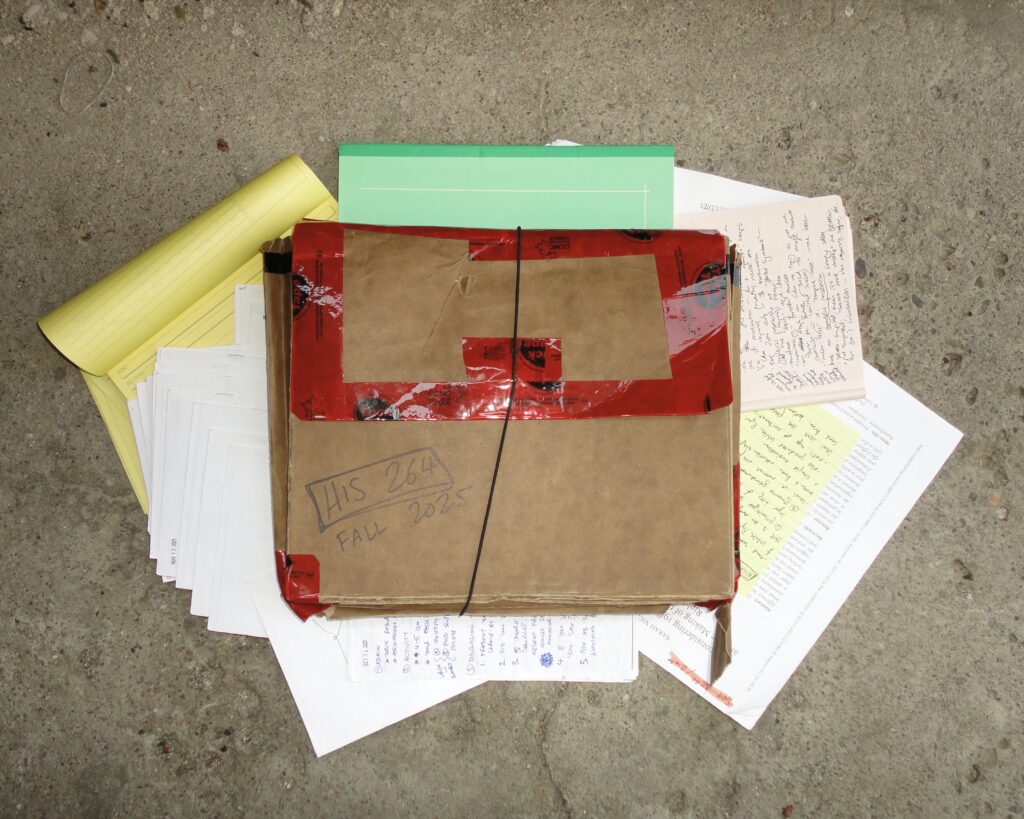

I made 80 booklets by folding sheets of paper in half widthwise and stapling them together with a long-reach stapler. I bought a large portable accordion folder to put them in (my supervisor took to calling this “The Penske File,” after Seinfeld). Every week I lugged this folder to and from my tutorials, distributed the booklets at the beginning and collected them at the end. I asked the students to write their names on the front of their booklets, and during tutorial discussion they would prop them up in front of them as nametags. The rest of the pages were for the writing exercises.

These writing exercises were essentially “exit tickets”: a small amount of written work completed during each tutorial, right before the end. Once or twice I asked the students to work on and compare their answers in small groups; this was particularly helpful in weeks when I suspected a fair number of students hadn’t even glanced at the readings. Most weeks I tried to pair the exercise with a “history skill.” One week, I gave them tips on “reading strategically” (what scholars sometimes call “gutting,” or reading quickly for essential information only), handed them two paragraphs of a scholarly article that was topical but that they hadn’t been assigned, and asked them to quickly summarize it. Another exercise involved citation skills. We talked about when to cite (I showed excerpts from that week’s readings on the projector and asked them why they thought this or that footnote was at the end of this or that sentence), and then I gave them an excerpt from an academic text with the citations removed and asked them to add them back in. Occasionally, the writing exercises served as scaffolding for tests and assignments; asking students to write down which essay topic they were thinking of choosing was a good way to get them to start thinking about essay options sooner rather than later.

My pitch to the students was that the booklets would 1) teach them each other’s names, 2) help them learn historical skills, and 3) help them practice physically writing with their hands to prepare for handwritten tests. I’d like to think that this experiment succeeded on the first and third points; in any case it would be hard to find evidence that it made them worse at learning each other’s names or writing by hand. I can also say that I found it helpful to practice reading their handwriting a bit each week. Did the booklets help students learn historical skills? Maybe, maybe not. Some of the students definitely learned something, and when I circulated a brief and anonymous online survey at the end of the term, twenty students (about a quarter of the total enrolled in my tutorials) at least humoured me enough to say they found it helpful.

My ulterior motive, of course, was to provide some counterprogramming to large language models. Following articles I’d read on pedagogy in the age of AI, I dreamt of a way to show students that they could use their own brains rather than relying on new technological tools. I wanted to give them confidence in their own abilities and enjoyment in using them. Although the booklets (designed by me) were not directly related to the final essay (designed by the professor), I hoped that these skills would transfer over and students would avoid AI in their final assignments. While there is a part of me that embraces a Luddite philosophy — I occasionally use a flip phone instead of a smartphone, and have refused to introduce AI into any aspect of my life — my goal here was less idealistic and more practical. I worry that if students outsource any part of the writing process to AI, they won’t learn the way we intend them to. Just as taking handwritten notes forces the brain to integrate new concepts in real time, resulting in better understanding and retention, I believe that the traditional essay-writing method — the slow and often frustrating process of coming up with an idea, searching through material, and putting one’s ideas into words — is what teaches our students to think. I gave my students the analogy of learning to lift weights: while it’s unlikely that I’ll be suddenly asked to bench or deadlift in the middle of the street, the muscles I build in the gym help me carry my groceries and lift my luggage into the overhead bin.

On this front, the results were more mixed. When I sat down to mark the only assignment for the course that students submitted online, I was dismayed to realize that many students had clearly used LLMs in some form. Besides a suspicious lack of truly bad papers, much of the language was oddly corporate and smoothed-over, populated with tells like “it’s not just X and Y — it’s Z” and an overemphasis on anything that was repeated throughout the assignment prompt. There was no way to know for sure that they’d been using AI (I am aware of the research that shows educators are not as good at spotting it as we think we are), but my conclusion was not without evidence. I suspect there was a spectrum of AI use, ranging from wholesale algorithmic generation to the use of “proofreading” tools to refine text. Whatever they’d done, I found it immensely dispiriting. These were often students who had done a perfectly respectable job on in-class assessments, who were more than capable of producing essays entirely on their own. Even a mediocre essay written by a human seemed more valuable than whatever these were. I ended the semester feeling distinctly depressed.

Despite the discouraging end to the semester, I would repeat the booklet experiment. Besides its Luddite ethos, it was a useful pedagogical tool in its own right; the first- and second-year students I teach have a range of backgrounds, and as an educator I want to prepare them as best I can for the demands of a university-level history course. These skills, I hope, will help them not only in the course for which I’m a TA but also in other courses they might take across different disciplines. In the future, I would probably make some adjustments. The most obvious, and also the most complicated for a Teaching Assistant rather than a course instructor, would be to make the booklets worth more of the course grade; incorporating them into the participation mark risks making students, who are often motivated by grades, feel like they’re doing a lot of work for a relatively small reward. I might also incorporate peer evaluation; this would allow students to think about their work in relation to others’ and affirm the interpersonal nature of education. Another strategy might be to link the booklets more explicitly to the final essay, so that students would do crucial preparation without the use of AI. I’ve also thought about ways to make the booklets a bit more creative: what if I called them “zines,” used stickers, or encouraged students to make the nameplate portion more colourful, if they wanted? Whatever I do in the future, I don’t want to give up. I still feel it’s important to try to be the best educators we can, even as conditions become increasingly difficult.

Madeleine Link is a PhD Candidate in the Department of History at the University of Toronto.